Meta

Prototyping generative AI/ML experiences

One of my key focuses at Meta is concepting and prototyping new experiences with emerging ML/AI capabilities, particularly diffusion models. I have developed an approach for bringing live models into high-fidelity design prototypes using internally hosted APIs and third-party services like Replicate.

Using this approach, our Instagram and Facebook teams are able to evaluate and tune model behavior before we invest the engineering effort to fully realize an experience. Unfortunately, I’m not able to share specific projects due to NDA.

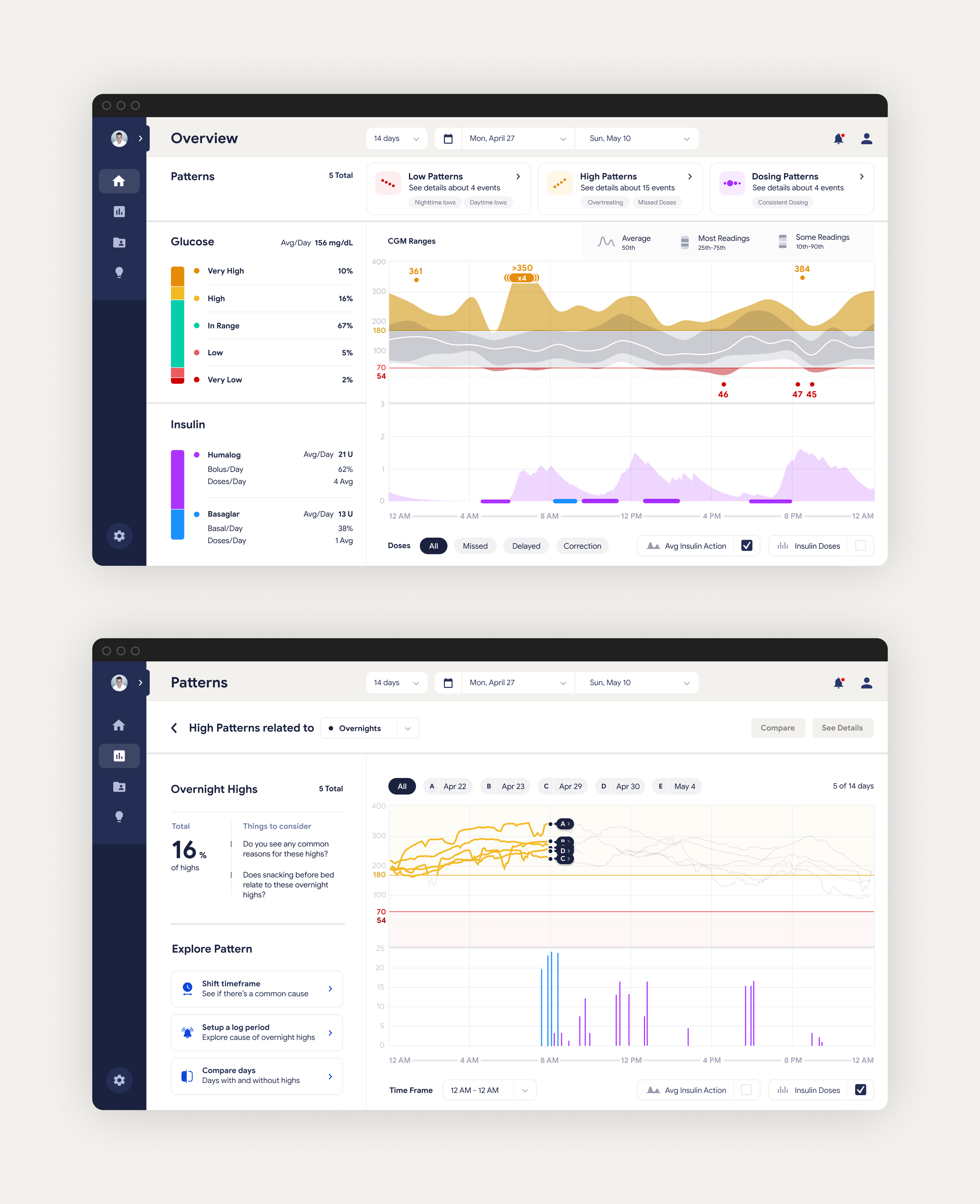

Eli Lilly

Visualizing patterns in diabetes data

Eli Lilly's Medical Innovation Group invited our team at Healthmade Design to create tools that would help people with Type 2 diabetes and their doctors see insights in insulin data.

Detailed data around dosing was not previously available, so we initially focused on a framework for visualizing patterns in insulin data. In order to do this, we developed a workflow with Lilly data scientists that allowed us to design with real patient data in Figma.

After aligning on a visualization standard with healthcare providers, we designed desktop and mobile applications that enable doctors and patients to incorporate dosing and glucose patterns into clinical treatment.

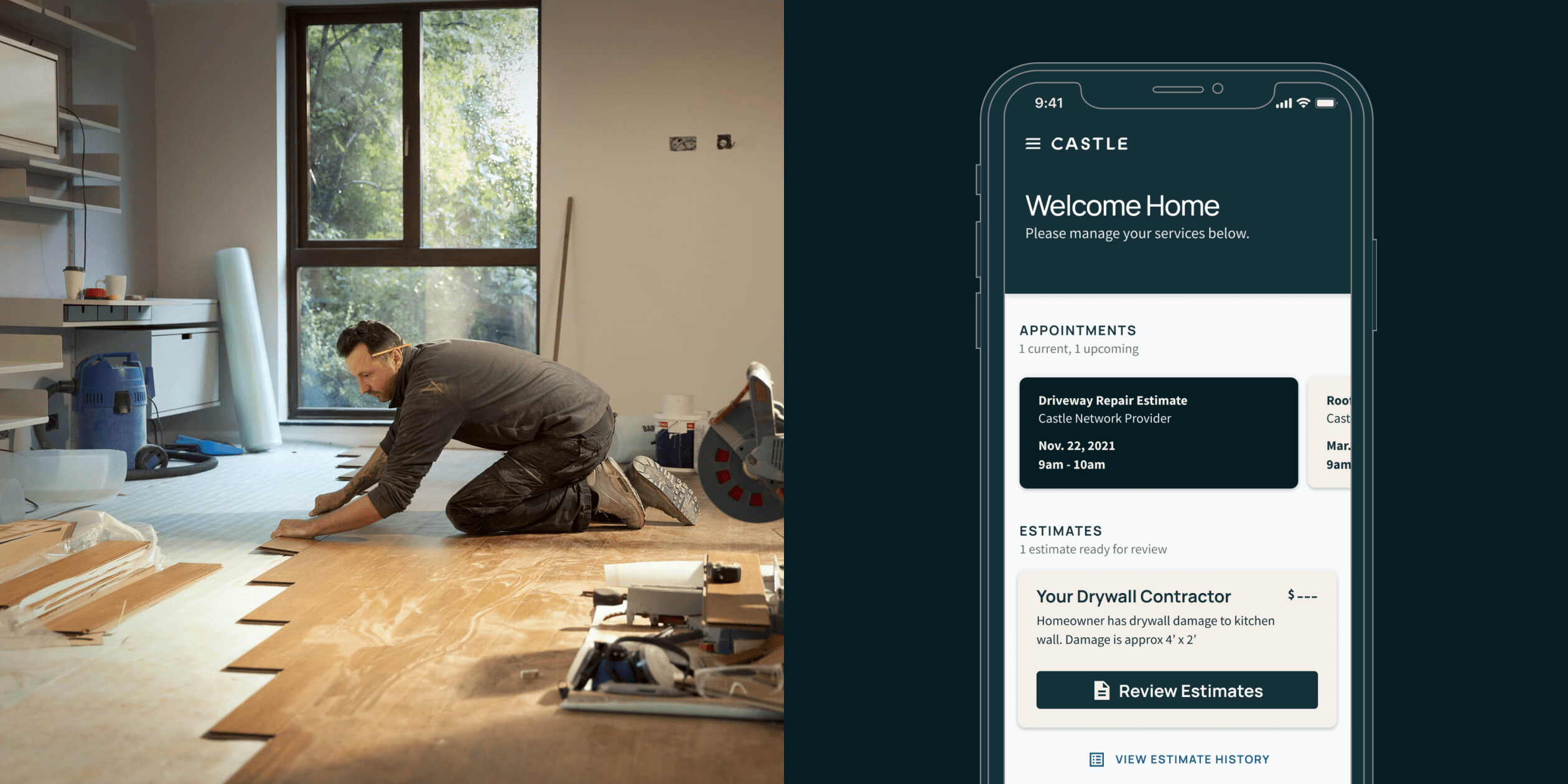

Progressive

Launching a new home repair venture

Progressive partnered with frog design to launch Castle, a new business targeted at improving the home services experience for both homeowners and contractors. In less than a year, we helped define the product, develop it, and pilot it with a limited launch in Florida and Texas.

Our team of designers and engineers at frog collaborated with an internal Progressive product team to build an ecosystem of applications for coordinating tasks between call center agents, customers, and contractors.

I led design during the project's implementation phase, translating loose wireframes into production-ready design specifications and detailed flows. We worked with developers, product managers, and QA to ship the pilot version of Castle available today.

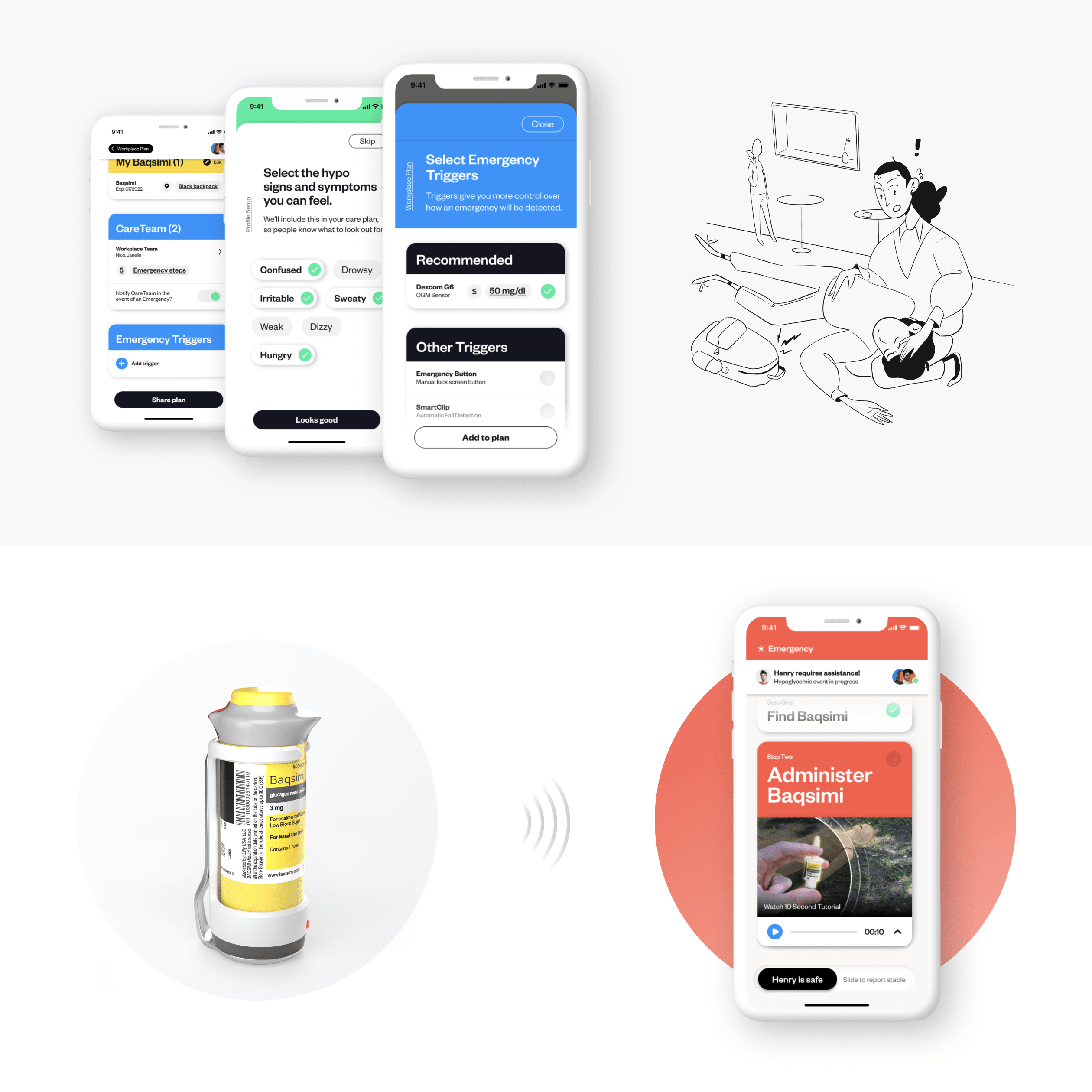

Eli Lilly

Improving the hypoglycemia rescue experience

Our team at frog design was asked to redesign the experience of rescuing people with diabetes (PWDs) from severe low blood sugar episodes. Such episodes are very dangerous because PWDs become unconscious, requiring someone else to administer rescue medication.

Lilly's new rescue medication, Baqsimi, is easier to deliver than others, but educating people about it beforehand and locating it during an emergency were challenges. To address this, our team designed a connected device that clips onto Baqsimi and pairs with a companion app.

Before emergencies, the app encourages PWDs to establish a community of care and shares resources about severe lows with the group. When an emergency is detected, the care team is notified and the device helps rescuers locate Baqsimi with vibration and a blinking light.

Runway

Superpowering creators with machine learning

I joined Runway when the mission was still to make machine learning capabilities more accessible to folks with no coding experience. Since then the team has pivoted to video tools.

During my time there, I led design and implementation of onboarding features, coded a series of RunwayML Experiments targeted at showcasing the platform's power (see below), and created the experience for integrations.

I also nudged the team toward design best practices by standardizing a component library and establishing a weekly cadence of user interviews.

Personal Experiments

Playing with machine learning capabilities

I'm really excited about machine learning and how it can be used to augment rather than automate. Using tools like ml5.js and TensorFlow.js, I've built a variety of experiments that explore both expressive and utility-focused possibilities for ML.

Some of my favorite projects include a squat counter that analyzes your form, a simple machine for matching drawings with emojis (based on Mike Matas' prototype The Brain), and a functional proof-of-concept of a UI for chaining the inputs and outputs of different models together.

These things are easier to understand when you see them, so I've included some demo videos below.

AT&T

Streamlining consumer identity

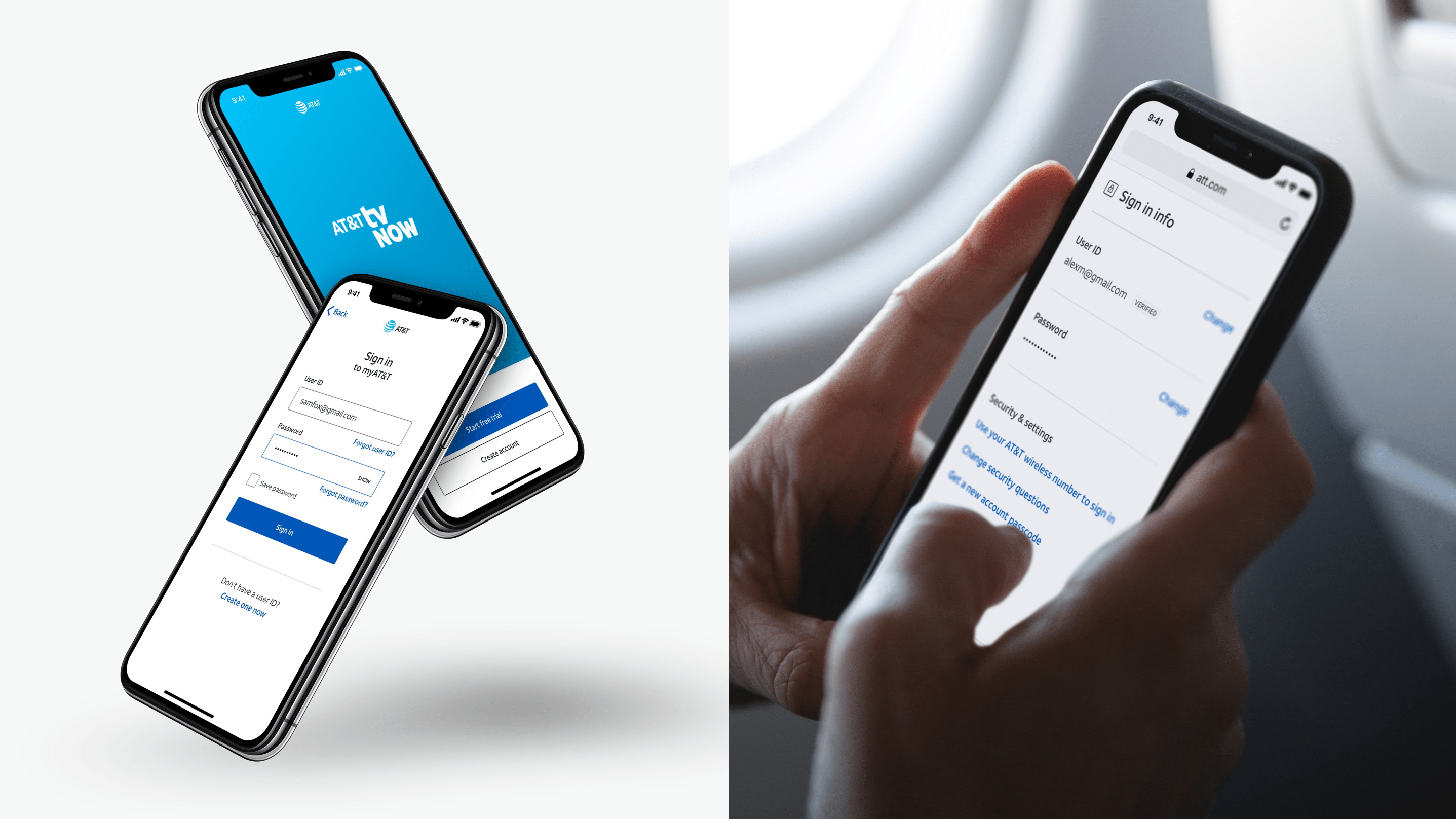

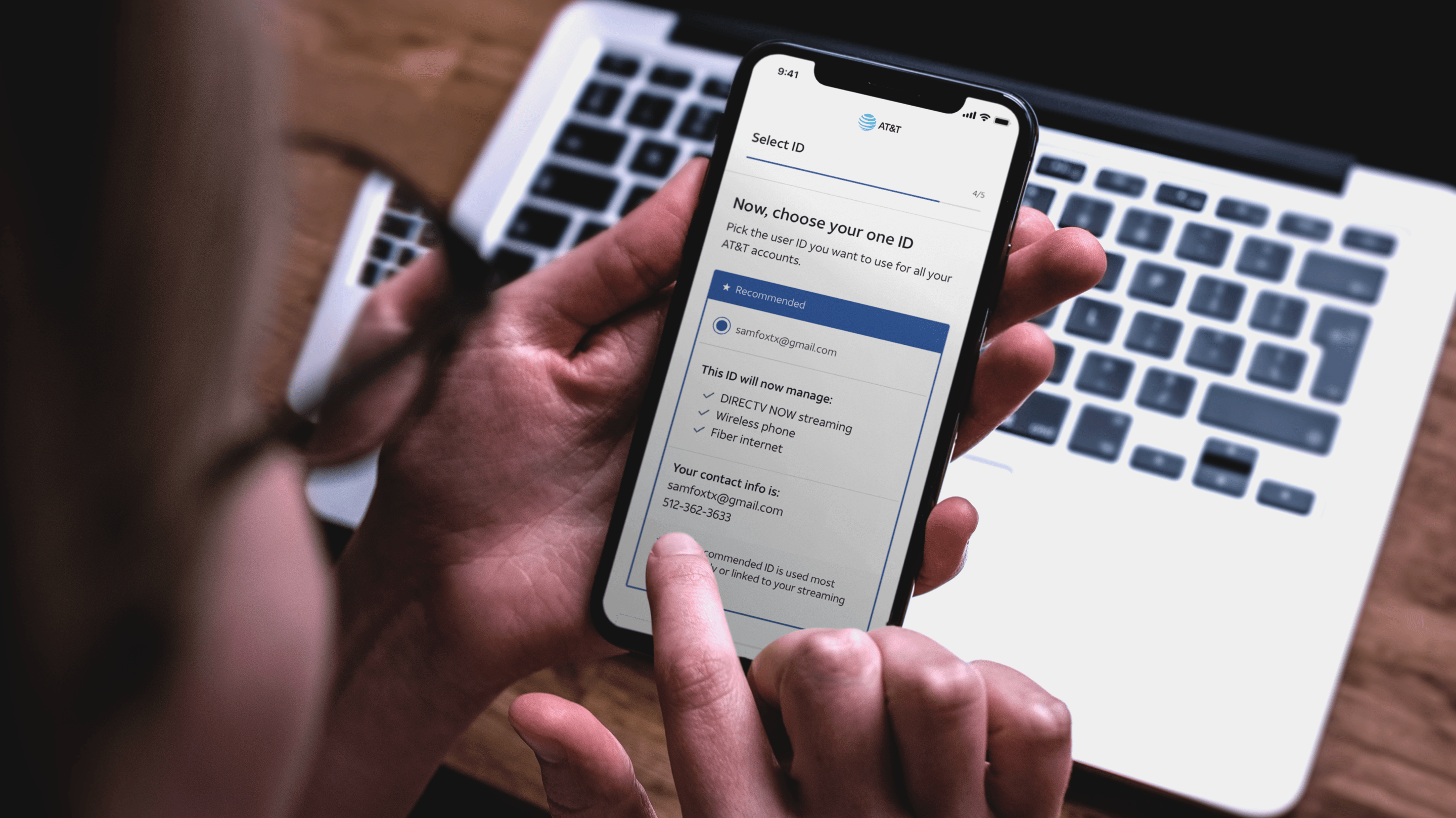

AT&T faced a big challenge following the Time Warner merger: many customers subscribed to multiple services, but each service required a separate account because of legacy systems.

I led an internal team of 8 designers, researchers, and content strategists in creating a unified authentication and identity management experience across all AT&T properties. To do this, we partnered with many parts of the business on a strategy for merging accounts and standardizing UI.

In the process, we helped develop a new design language for all AT&T products and integrated tech that uses network data to shortcut password authentication. The new experience is in the process of being rolled out.

AT&T

Helping support agents make decisions

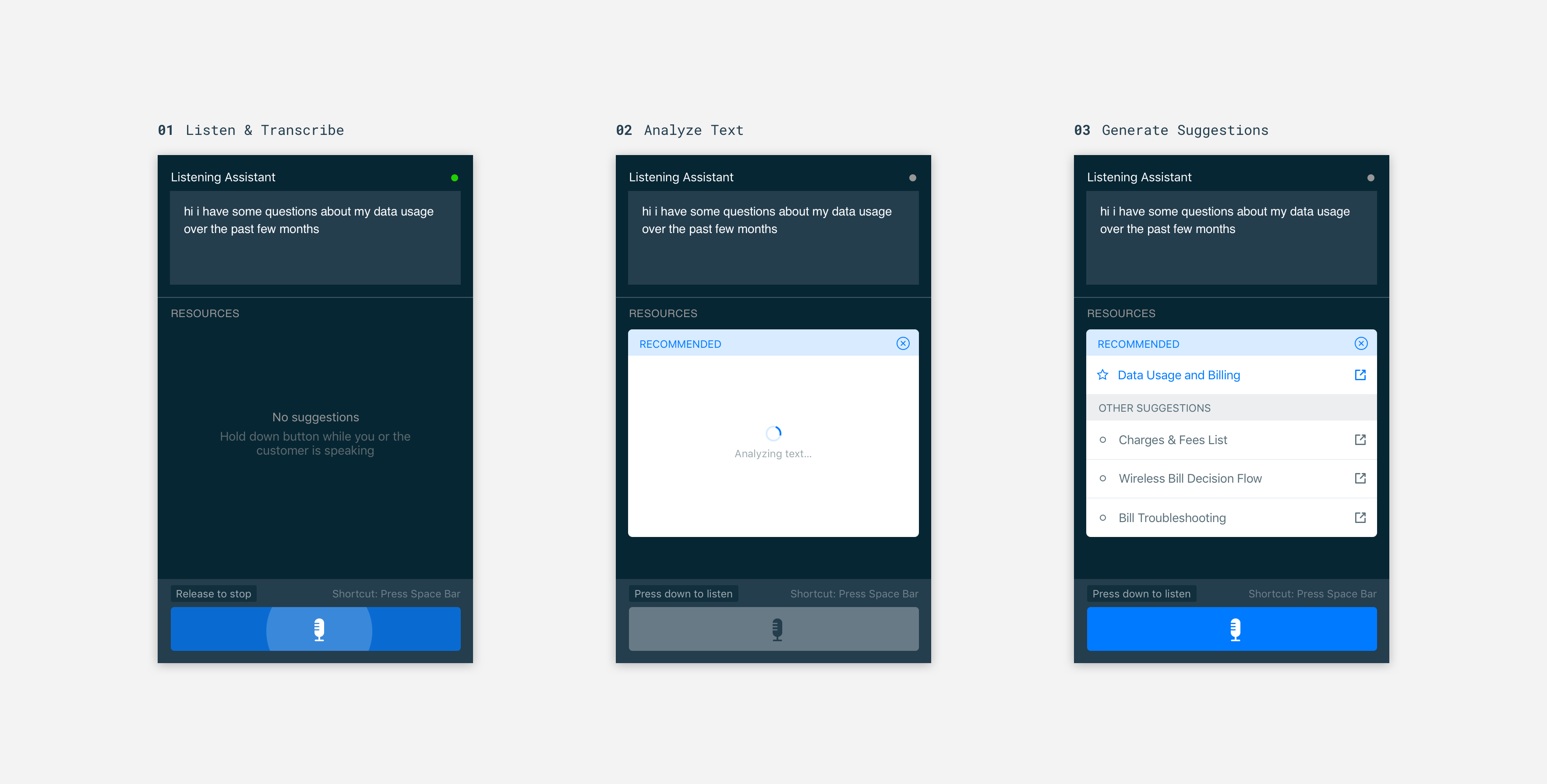

During a project centered around new search capabilities at AT&T, my boss and I had the idea to augment call center agents with an assistant that listens in on calls, determines the customer's intent, and presents the agent with suggestions for their next step.

After building a prototype that was convincing enough to secure executive support, I worked with a team of engineers to develop a functional proof-of-concept.

Most of our effort went into analyzing call records to identify common intents and training machine learning models based on those intents. A recording of the final demo is below.

Big Tomorrow

Envisioning an AI-first student experience

A lot of thought has gone into the future of higher education, but how students interact with institutions gets less attention.

While working at Big Tomorrow, a design consultancy focused on education and healthcare, several colleagues and I had the opportunity to explore how emerging technologies might shape the student experience moving forward.

This work was intended to inform future engagements with clients like the University of Texas and Harvard Business School. It also allowed us to articulate some ideas about Contextual User Interfaces in a case study.

Defining Portal's interaction model

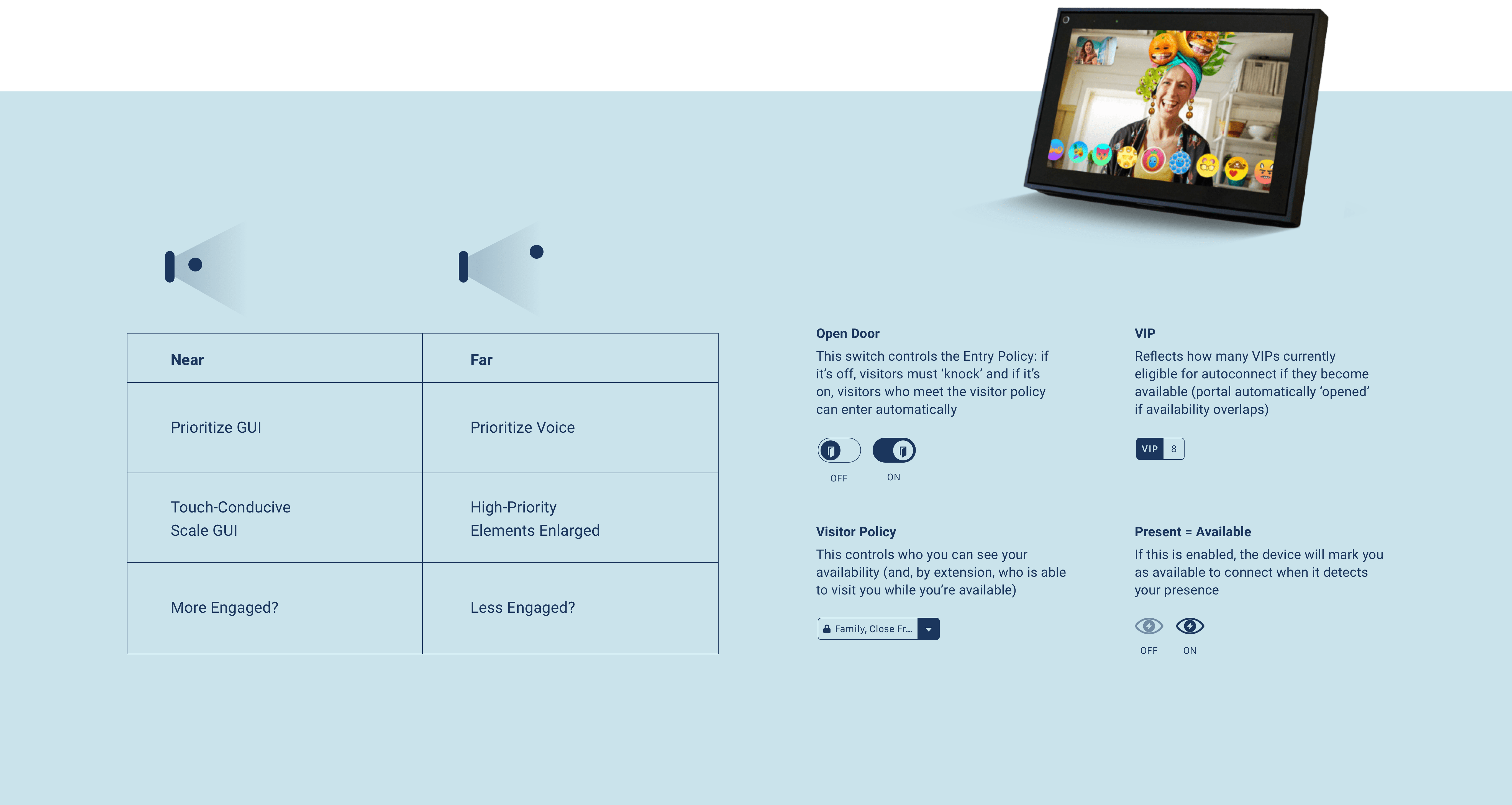

I joined a small team at IDEO tasked with developing interaction concepts and prototypes for Facebook's new telepresence device. Most of our work centered on two things: (1) an interaction model that shifted between GUI and voice, and (2) initiating visits without explicitly calling someone.

This project was completed while Facebook was reprioritizing privacy. Though our emphasis was on giving people maximum control over their visitor policy, some of the ideas would have required face tracking and other explicit signals of availability to work. As such, they didn't make it into the final product.

Tidepool

Prototyping glucose trends with real data

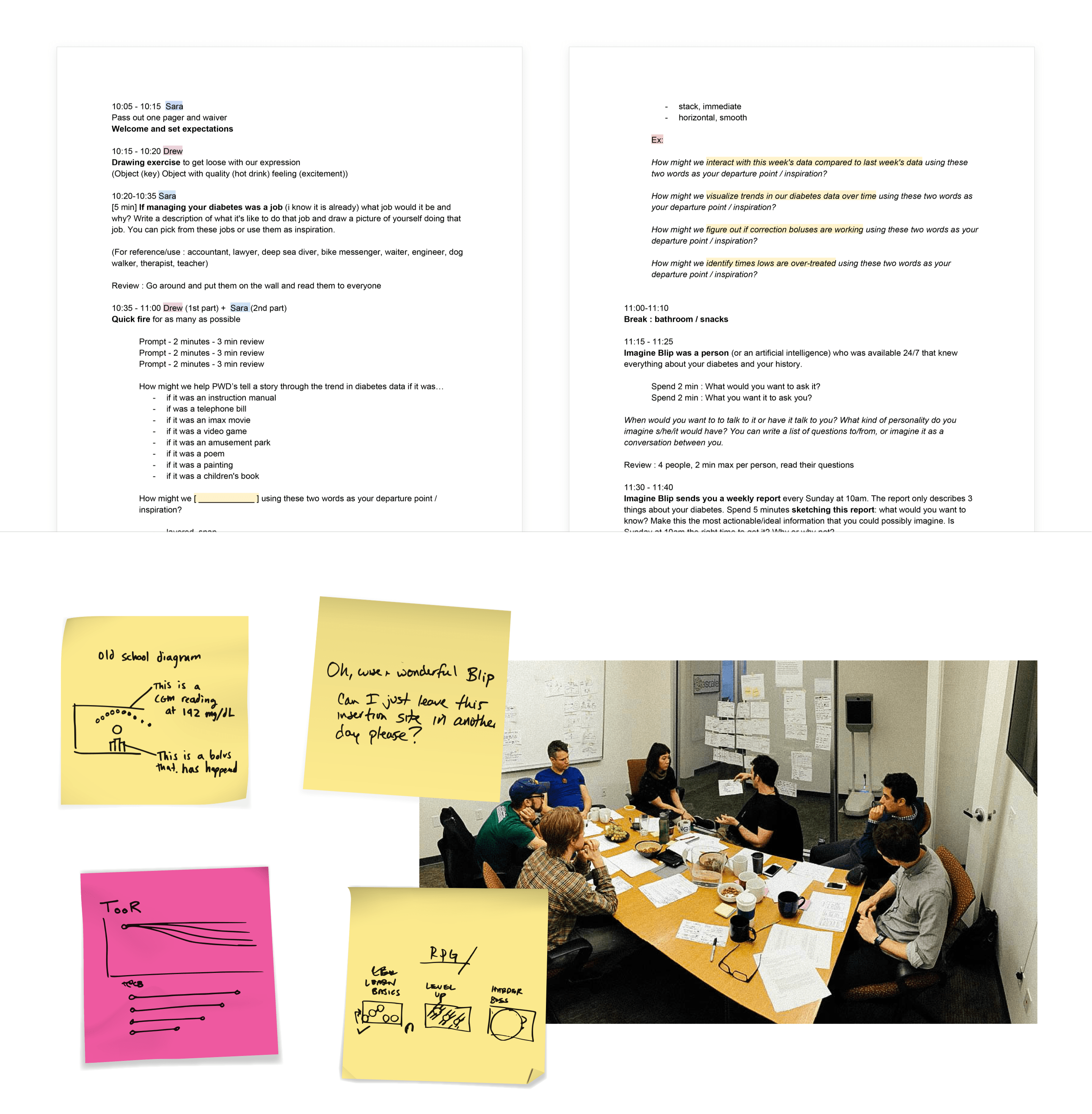

Tidepool is a non-profit, open-source effort to reduce the burden of managing Type 1 diabetes. Blip, the cornerstone of their software suite, serves as a hub for all of a person's diabetes data. I was invited to help their design lead craft a vision for how to analyze and present trends.

The team had been having trouble getting meaningful feedback from prototypes that used fake visualizations and dummy data. I helped them create high-fidelity prototypes with real data in a short time. These got better feedback, giving the team higher directional confidence.

DIRECTV

Exploring directions for a new streaming service

This was another project completed while freelancing for IDEO. Our team crafted a vision for DIRECTV's new streaming service.

We investigated a variety of interaction models and concepts with high-fidelity prototypes. This approach made it easier to test with users and communicate ideas in large reviews.

Some of the key themes we pushed were bringing the magic of live into an on-demand experience, evolving discovery into a personalized channel just for you, and making your mobile device a companion to the 10-foot experience.

Bellow

Making better phone calls

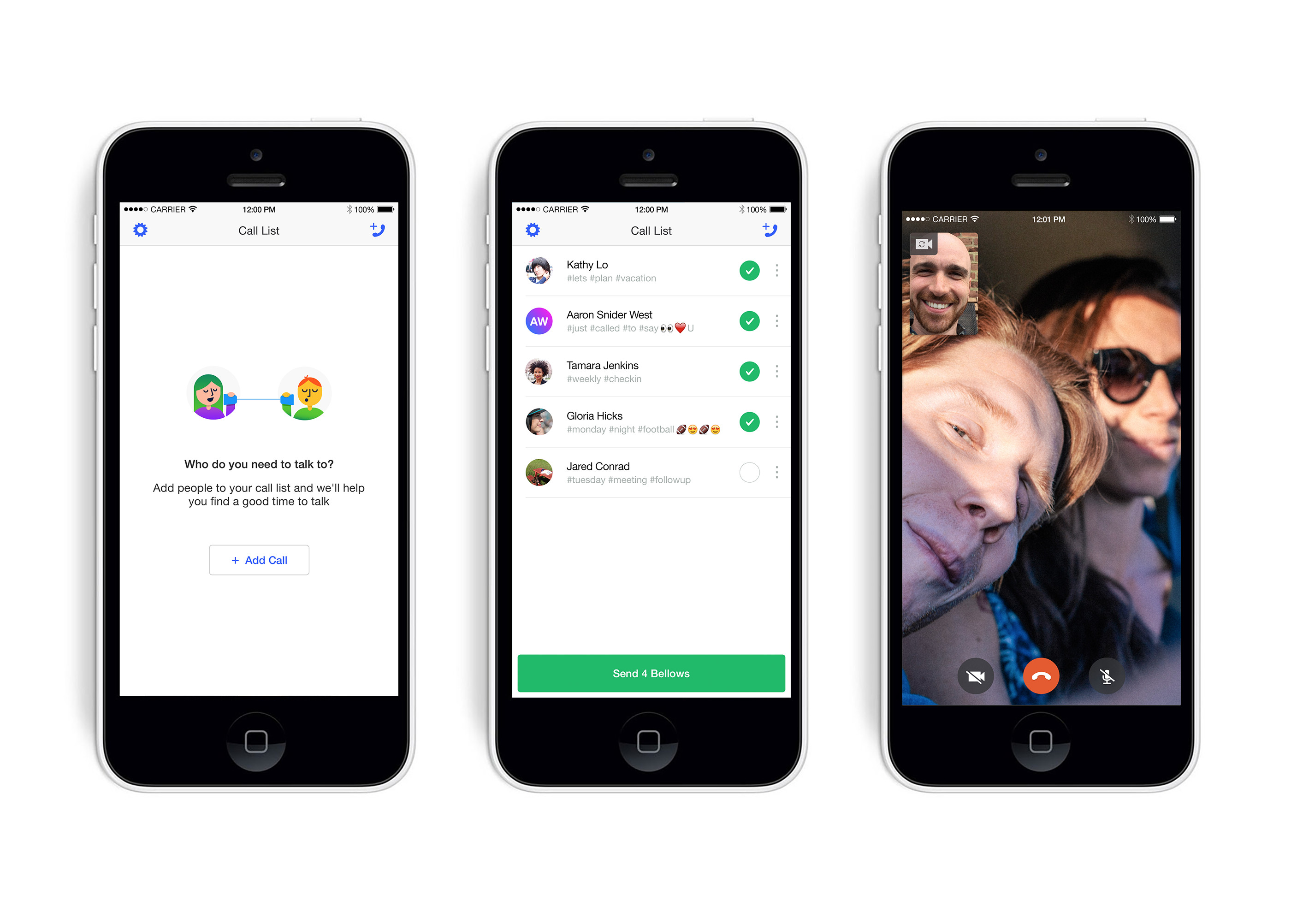

Text messaging is great for certain kinds of communication, but it's a poor substitute for presence. Voice and video calls are better, but why is it so hard to pick up the phone?

With Bellow, two colleagues and I built an app that tried to address the social friction the current call model encourages. We replaced interruption and anxiety with a system focused on mutual availability and control.

Runkeeper (now ASICS Digital)

Designing for fitness tracking

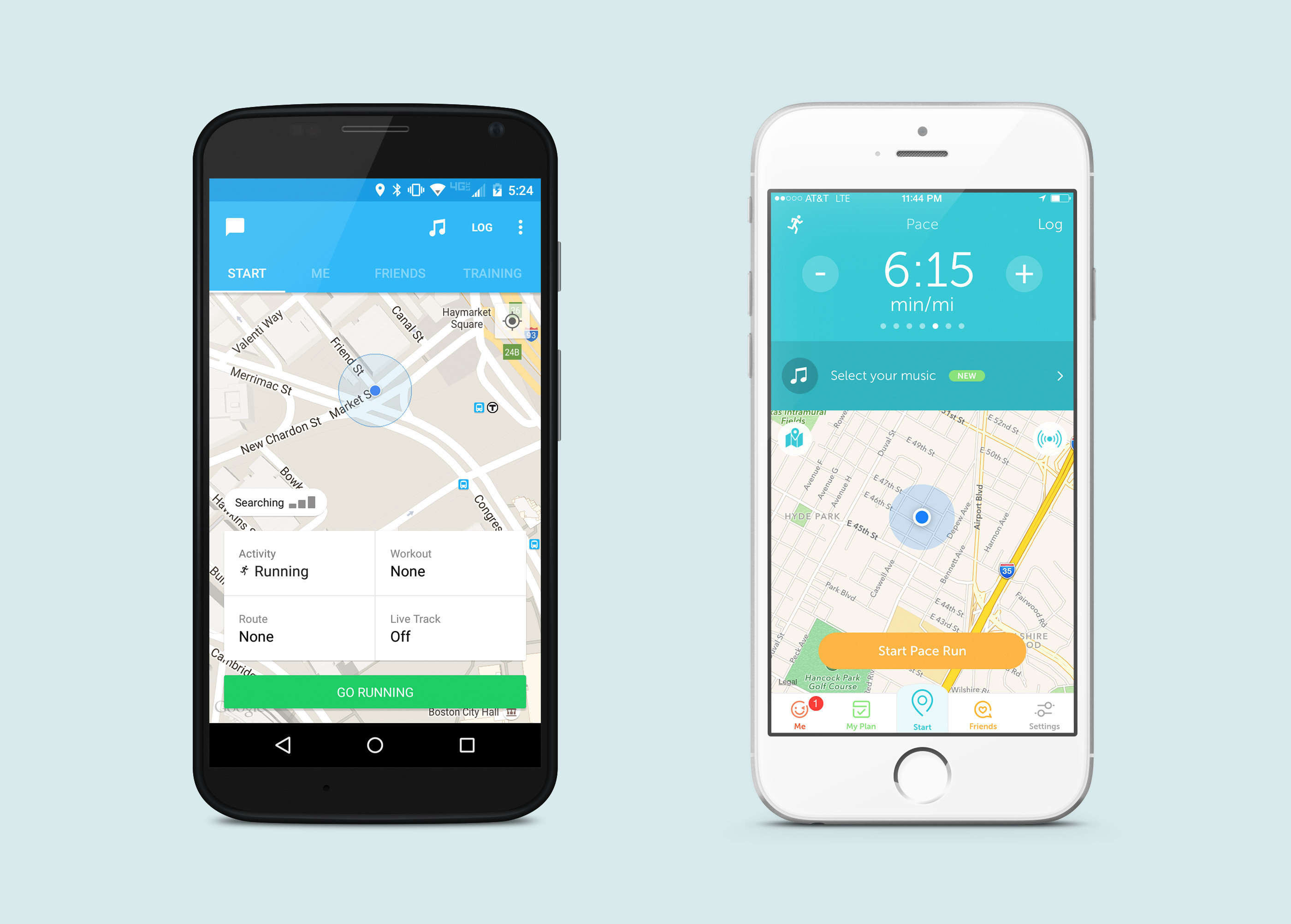

Runkeeper is one of the world's largest digital fitness platforms with over 45 million users spread across 192 countries. At different points during my two years there, I led design for Growth, Elite (paid offering), and Brand Partnership teams.

During the company's march towards profitability, I had the opportunity to work on everything from generative training programs to brand-powered reward systems. ASICS acquired Runkeeper in 2016.

Agilent

Upgrading science lab notebooks

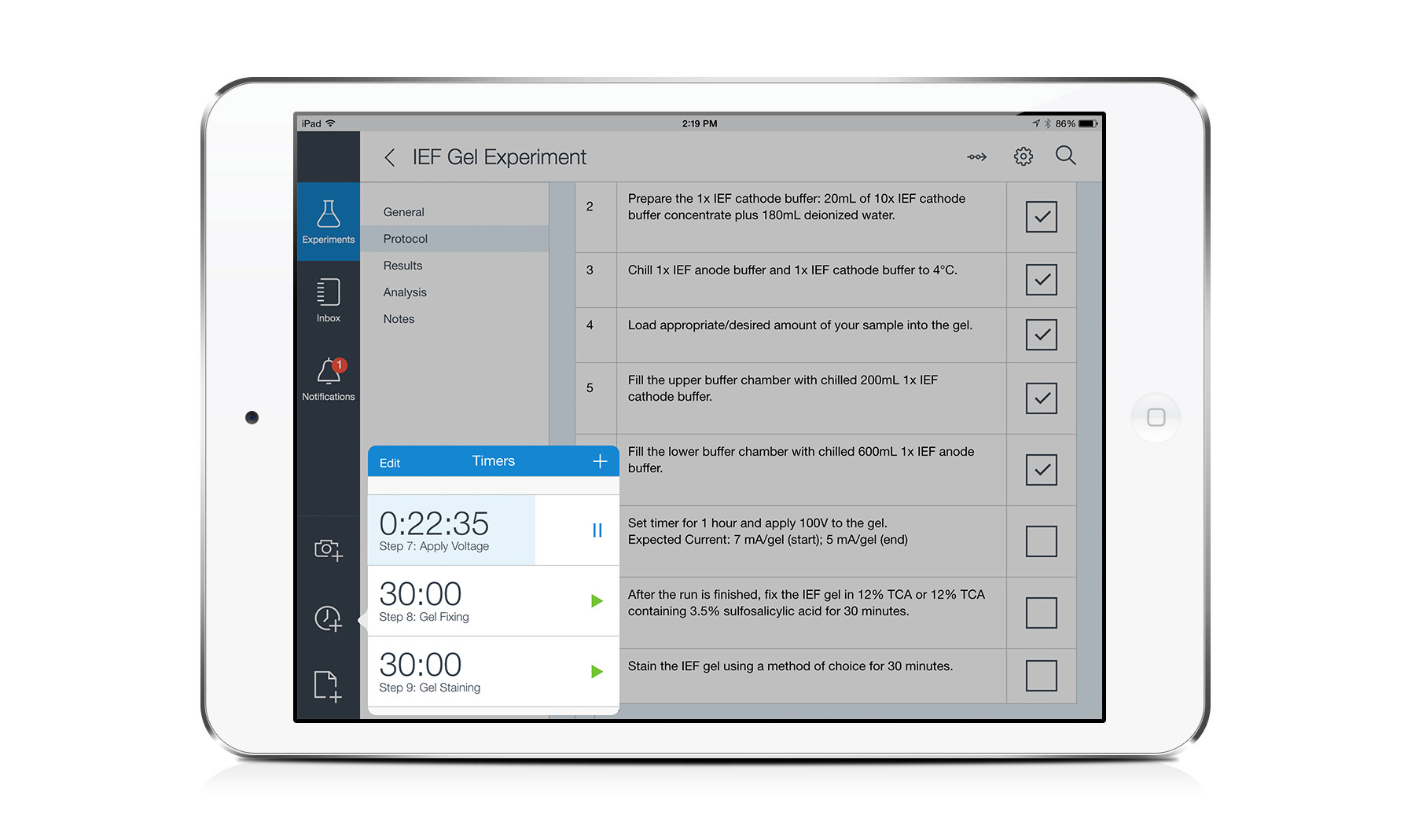

Agilent's Electronic Lab Notebook (ELN) was developed to help scientists better capture, index, and mine their work. But an overly complex UI and desktop focus made it challenging to use within its intended lab context.

Together with a small team, I helped define, design, and implement a tablet version of the ELN suited to the specific needs of lab workers.

Copenhagen Institute of Interaction Design

Collaborating on awesome stuff at graduate school

I attended the Copenhagen Institute of Interaction Design, where we prototyped hardware, software, and services together as a class. Projects I'm most proud of include a modular set of audio controls that live on your fridge, an exploration into how emerging technology might influence our experience of death, and an extra energy-efficient lamp named Fickle.

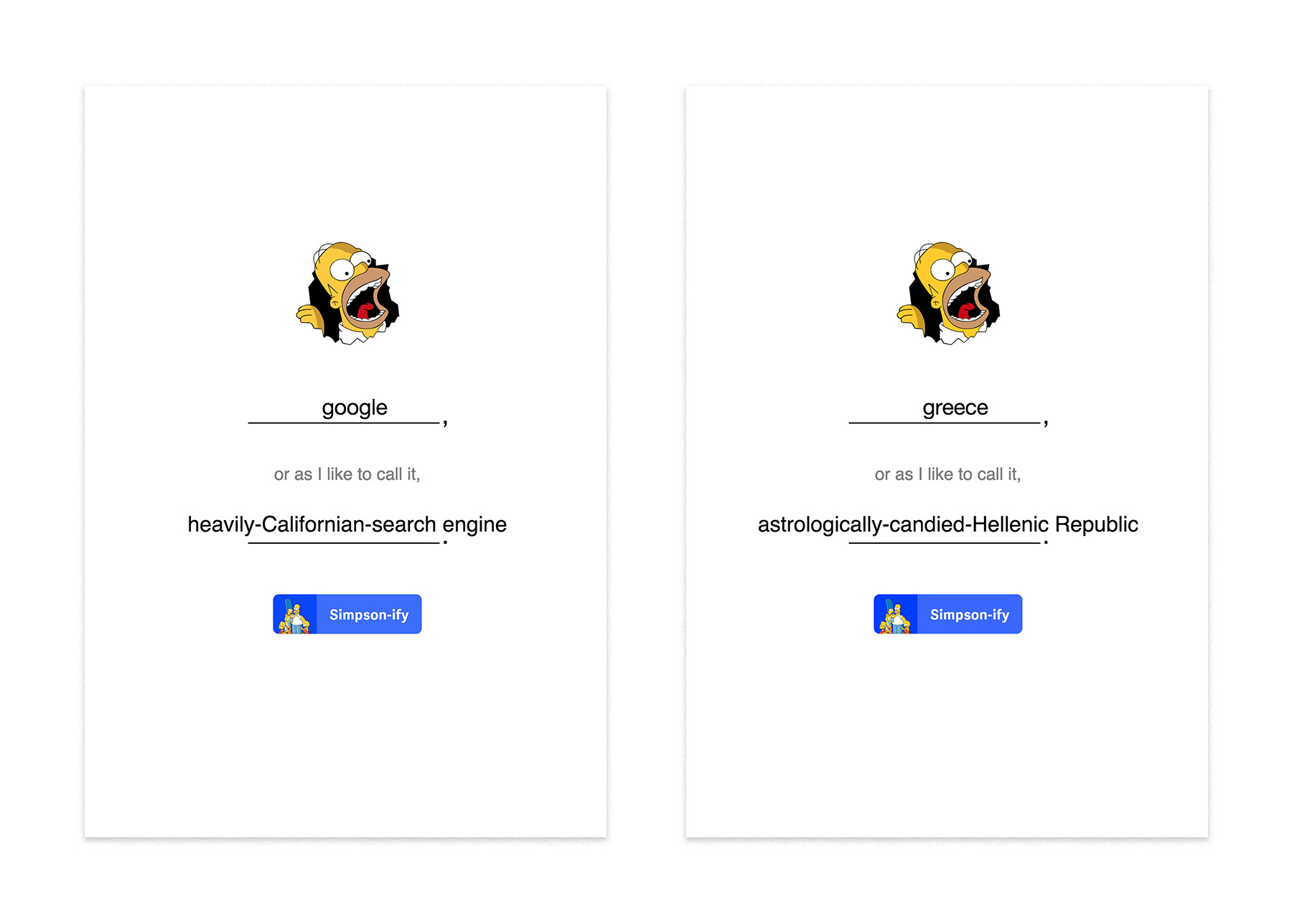

Simpsonify

Translating words into Simpsons-speak

A colleague wondered aloud about how much work it would take to develop a simple web app that could translate a user-defined keyword into Simpsons-speak. I spent an afternoon prototyping it for fun using Framer.js, a library of made-up Simpsons words, and the Big Huge Thesaurus API.

Search Party

Prototyping an interactive music video

There's lots of talk about what VR means for gaming, but there are also interesting cinematic opportunities. Search Party is a quick demo of a choose-your-own-adventure style music video.

The basic concept is that you start out hearing a song in the distance and can then move either towards or away from it. This mechanic allows the experience to become a bit of a maze, where the only cues for reaching the music's source (i.e., solving the maze) are auditory.

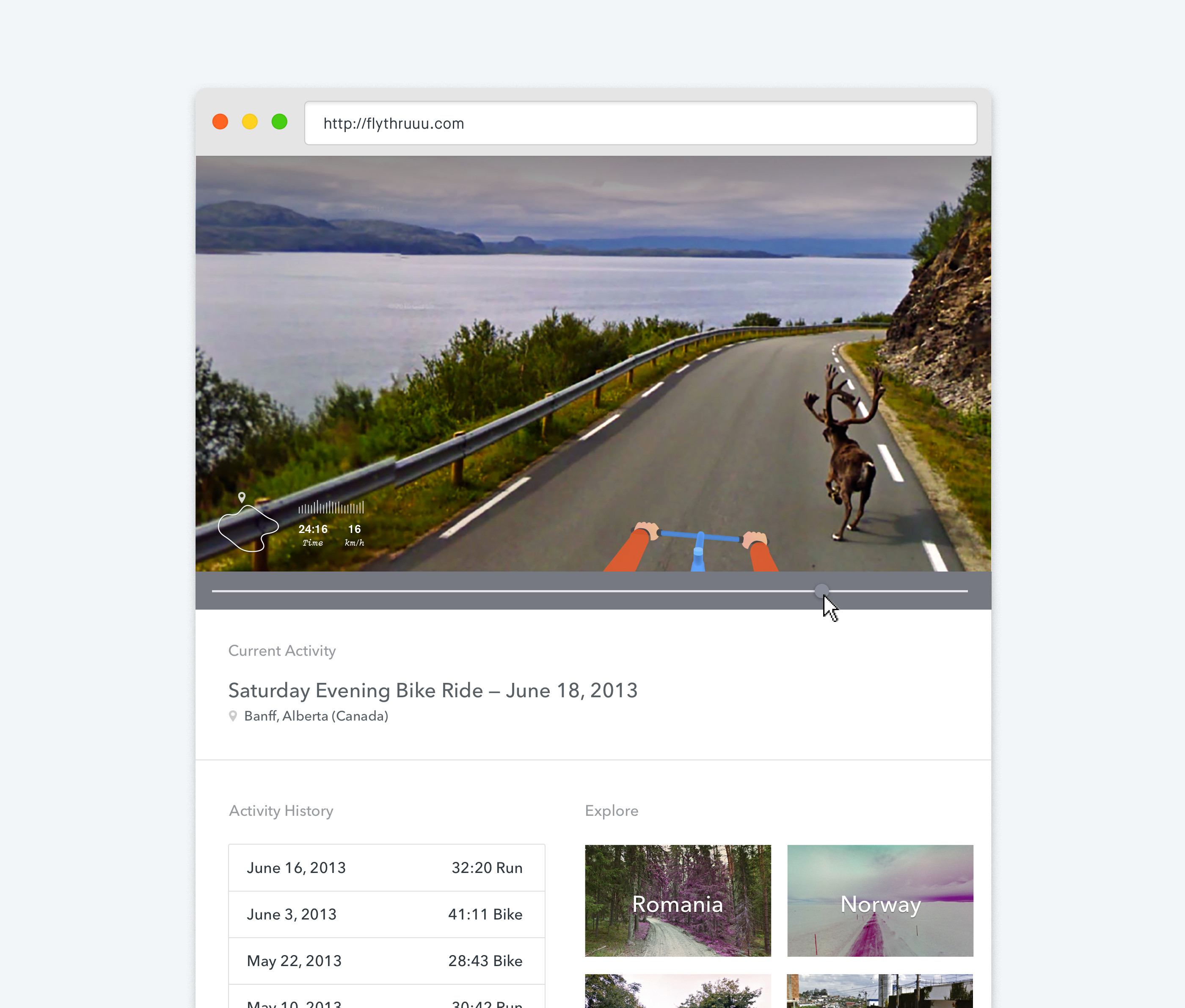

Runkeeper Flythru

Prototyping a more engaging workout summary

During a hackathon at Runkeeper, I teamed up with the company's CTO, Joe Bondi, to compose little GIF summaries of tracked runs and bike rides. The idea was to combine GPS traces, Google Street View timelapses, and animations layered in based on activity-specific triggers. We prototyped a proof-of-concept that automatically generated timelapses and then rendered them out as GIFs.